16-09-2021

“Kubernetes, also known as K8s, is an open-source system for automating the deployment, scaling, and management of containerized applications.” This modest definition from kubernetes.io doesn’t do justice to the role Kubernetes actually plays in container management today. What was behind its creation, and what role does it play in IT today, especially in DevOps?

The beginnings of the most popular “conductor” of containers

Before we begin our adventure with Kubernetes, let’s consider what it was created for and what it means to be a container orchestrator.

Orchestrator

Like the conductor of a symphony orchestra, an orchestrator decides when each performer (in this case, a container) starts and when it finishes its work.

Packaging applications into containers has become both fashionable and very convenient. Microservices, or applications that are independent of each other, encourage a continuous delivery approach. So far, this is the ideal situation.

However, let’s imagine that we have one machine with a container runtime installed (let’s say Docker) and we want to add another one because we are running out of resources. We also know that we’ll remove that machine as soon as the load decreases.

Meanwhile, an application has just been deployed. It doesn’t start properly, while users expect to use the system without any problems. The result is downtime that ruins the user experience.

Managing containers in a changing environment is challenging without a system that keeps an eye on all operations so that the end user does not suffer from service unavailability.
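The supervision described above can be pictured as a control loop that compares a desired state with what is actually running. The sketch below is a toy model in plain Python, not Kubernetes code: `Container`, `reconcile`, and `next_name` are hypothetical names invented for this illustration, and health is reduced to a single boolean flag.

```python
from dataclasses import dataclass
from itertools import count


@dataclass
class Container:
    name: str
    healthy: bool = True


def reconcile(running, desired_replicas, new_name):
    """Return the containers that should exist after one supervision pass.

    Toy model: unhealthy containers are dropped, then containers are
    started or stopped until the count matches desired_replicas.
    """
    # Keep only the containers that are still healthy.
    alive = [c for c in running if c.healthy]
    # Start replacements until the desired count is reached.
    while len(alive) < desired_replicas:
        alive.append(Container(new_name()))
    # Stop surplus containers when the target drops (e.g. the load decreased).
    return alive[:desired_replicas]


_ids = count(1)


def next_name():
    # Hypothetical naming scheme: web-1, web-2, ...
    return f"web-{next(_ids)}"


containers = [Container(next_name()) for _ in range(3)]
containers[1].healthy = False  # simulate a crash of web-2
containers = reconcile(containers, 3, next_name)
print([c.name for c in containers])  # ['web-1', 'web-3', 'web-4']
```

Calling `reconcile` again with a smaller `desired_replicas` trims the list, which corresponds to removing capacity once the load goes down.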

These problems, and more, were noticed in-house by Google engineers. That’s why they developed the Borg orchestration system and later, less successfully, Omega. In 2014, their expertise allowed them to release a revolutionary open-source solution called Kubernetes.

The steadily growing power of Kubernetes

Kubernetes has grown in popularity because of its flexibility and scalability in container management tasks. It works with virtually any container runtime and any type of underlying infrastructure, be it public cloud, private cloud, or on-premises servers.

Kubernetes has been battle-tested over many years and is built on a solid architecture. It manages more than 2 billion containers per week at Google, probably the biggest such platform in the world.

There’s also something that gives it a significant advantage over other systems of its kind: support from the community and, of course, from business. Nearly 78 percent of companies have chosen Kubernetes to orchestrate their architecture.

Architecture

Kubernetes itself is a collection of components, typically run as containers on top of a container runtime (e.g. Docker).

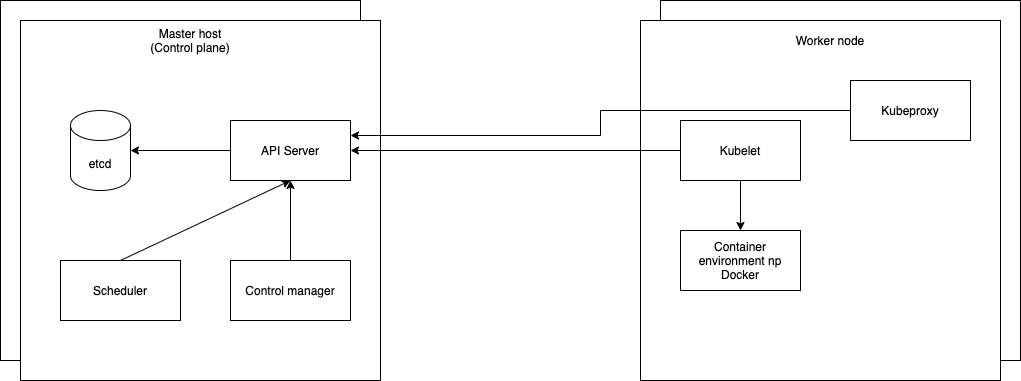

A cluster consists of machines that have a management role (master nodes) and an execution role (worker nodes).

- API Server – a REST API server used to communicate between the user and other system components within the cluster.

- Kubelet – communicates with the API Server and is responsible for managing containers on a given node.

- Controller Manager – runs the controllers that continuously bring the actual state of the cluster in line with the desired state.

- Scheduler – assigns objects (applications) to appropriate nodes.

- Kube-proxy – runs on each node and maintains the network rules that route traffic to the applications running there.

- Etcd – a distributed key-value database for storing cluster state.
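To make the Scheduler's role more concrete, here is a minimal sketch of placing workloads on nodes. It is a simplified illustration rather than the real kube-scheduler algorithm: the function `schedule`, the node names, and the rule "pick the node with the most free CPU" are assumptions made for this example, loosely following the idea of filtering out unsuitable nodes and then scoring the rest.

```python
def schedule(pod_cpu, nodes):
    """Pick a node for a pod that requests pod_cpu millicores.

    nodes maps a node name to its free CPU in millicores and is updated
    in place. Returns the chosen node name, or None when nothing fits.
    """
    # Filter: keep only the nodes with enough free CPU for the pod.
    candidates = {name: free for name, free in nodes.items() if free >= pod_cpu}
    if not candidates:
        return None
    # Score: prefer the node with the most free CPU (a hypothetical rule).
    chosen = max(candidates, key=candidates.get)
    # Bind: reserve the requested CPU on the chosen node.
    nodes[chosen] -= pod_cpu
    return chosen


# Hypothetical cluster with two worker nodes.
nodes = {"worker-1": 1000, "worker-2": 2000}
for pod, cpu in [("api", 500), ("db", 1500), ("cache", 1200)]:
    print(pod, "->", schedule(cpu, nodes))
# api -> worker-2
# db -> worker-2
# cache -> None
```

The last pod stays unscheduled because no node has 1200 millicores left, which is the kind of situation in which another machine would have to be added to the cluster.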

Advantages of implementing K8s:

- Efficient deployment of applications to environments;

- Automatic application restart in case of failure;

- Scalability of the solution;

- A range of mechanisms that keep applications highly available during failures and deployments;

- A large community and availability from most cloud providers.
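One of the availability mechanisms mentioned in the list above is replacing application instances gradually during a deployment. The following is a rough sketch of that idea under simplifying assumptions: `rolling_update` and `is_healthy` are hypothetical names, replicas are represented only by their version strings, and the health check is a plain callable rather than a real probe.

```python
def rolling_update(replicas, new_version, is_healthy):
    """Replace replicas one at a time, stopping at the first failed check.

    replicas is a list of version strings currently serving traffic.
    is_healthy is a callable(version) -> bool standing in for a health check.
    Returns (resulting replicas, True if the rollout completed).
    """
    result = list(replicas)
    for i in range(len(result)):
        if not is_healthy(new_version):
            # Stop here: the remaining old replicas keep serving users.
            return result, False
        # The new instance is healthy, so it can take over this slot.
        result[i] = new_version
    return result, True


print(rolling_update(["v1", "v1", "v1"], "v2", lambda version: True))
# (['v2', 'v2', 'v2'], True)
print(rolling_update(["v1", "v1", "v1"], "v2", lambda version: False))
# (['v1', 'v1', 'v1'], False)
```

In the second call the new version never becomes healthy, so every old replica stays in place and users are not affected by the broken release.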

Disadvantages:

- Because of the large number of features, getting started with K8s requires a fair amount of knowledge up front.

Installing K8s locally:

https://minikube.sigs.k8s.io/docs/start/

Installing on bare metal (on-premises):

https://github.com/kubernetes-sigs/kubespray

Cloud:

- GCP:

- AWS:

- AZURE: